A simple, decade-old hacker trick likely led to the hacking of critical Hillary Clinton staff members. If John Podesta can fall for it, with the Presidential election at stake, so can you. So listen up.

I know I sound like a broken record when I warn people to think before they click, and I know most people think they’ll never fall for silly hacker tricks, but hey, this stuff is important. It very well might have an impact on who gets to be the leader of the free world.

Information continues to trickle out of hacked emails that come from senior officials in Hillary Clinton’s campaign team, including campaign chair John Podesta. This month brought additional evidence describing how it happened.

It was pretty easy.

It appears that Podesta, and hundreds of other Clinton camp workers, received targeted phishing emails telling them they had to change their password immediately. Of course, workers who fell for the email were led to a look-alike page controlled by hackers. The ruse worked in part because the links used the URL-shortening service Bitly, which turns long web addresses into short ones for convenience. Bitly also has the terrible quality of completely obscuring where the clicker is actually going until it’s too late. For years, I’ve thought this to be a security flaw inherent in link shorteners, and I believe Bitly and other URL shorteners need to engineer a fix.

In the meantime, you need to know three critical things:

A) Bitly links can’t be trusted; never click on a Bitly link when anything even remotely sensitive is involved

B) Any plea to urgently change your password should be met with serious skepticism. When you decide to do so, always manually type the service’s address into your web browser and navigate to its password update page. NEVER click on a link telling you to do so. Even if you are sure it’s legitimate.

C) The presidential election might hang in the balance because of this simple hack. So, yes, anyone can fall for it. You can too.
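For readers comfortable with a little scripting, here is a minimal sketch of one way to see where a short link points without opening it in a browser. It assumes the shortener answers a HEAD request with an HTTP redirect and a Location header, which is how these services generally work but is not guaranteed. The function name `peek_redirect` is my own invention for illustration, not part of any Bitly tool.

```python
import http.client
from urllib.parse import urlsplit


def peek_redirect(short_url, timeout=5.0):
    """Ask the shortener where a link points, without following the redirect.

    Hypothetical helper for illustration. Returns the Location header if the
    server answers with a 3xx redirect, otherwise None.
    """
    parts = urlsplit(short_url)
    if parts.scheme not in ("http", "https") or not parts.hostname:
        raise ValueError("expected an http(s) URL")

    if parts.scheme == "https":
        conn = http.client.HTTPSConnection(parts.hostname, parts.port, timeout=timeout)
    else:
        conn = http.client.HTTPConnection(parts.hostname, parts.port, timeout=timeout)

    try:
        path = parts.path or "/"
        if parts.query:
            path += "?" + parts.query
        # HEAD asks for headers only; http.client never follows redirects itself.
        conn.request("HEAD", path)
        response = conn.getresponse()
        if 300 <= response.status < 400:
            return response.getheader("Location")
        return None
    finally:
        conn.close()
```

Calling `peek_redirect` on a short link returns the next address in the chain as plain text, so you can read it before deciding whether to visit. It only reveals one hop, and a destination that looks fine at a glance can still be a fake, so this is a supplement to the rules above, not a replacement.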

The Bitly link

Back in June, SecureWorks published a pretty convincing research paper that reconstructed the careful attack on the Hillary Clinton presidential campaign. Analyzing data left publicly available on a Bitly account, it found evidence of thousands of spear phishing emails targeting election officials between March and June of this year. The targets included the national political director, the finance director, the director of strategic communications, and so on.

For example, 213 links were created targeting 108 email addresses at HillaryClinton.com. The hackers succeeded again and again: “20 of the 213 short links have been clicked as of this publication. Eleven of the links were clicked once, four were clicked twice, two were clicked three times, and two were clicked four times,” the report says.

The group also targeted personal Gmail accounts belonging to campaign officials. This produced plenty of hits, too.

“They include the director of speechwriting for Hillary for America and the deputy director office of the chair at the DNC,” the report says. “(The hackers) created 150 short links targeting this group. As of this publication, 40 of the links have been clicked at least once.”

Clicking on a link does not mean the clicker subsequently entered login information and fell for the scam. But the high click rate certainly suggests some victims did. So does the timing of all this: the DNC hack was revealed in June, weeks after this spear phishing campaign.

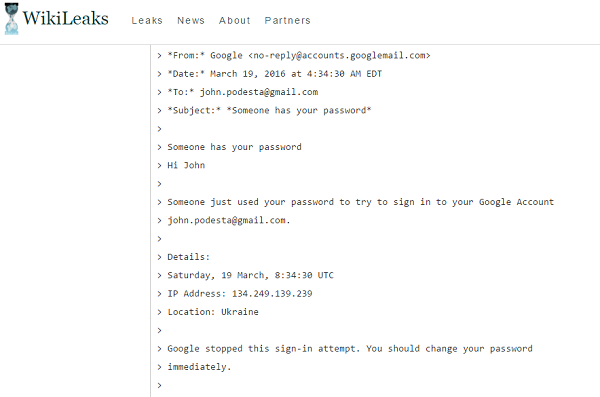

The release last week on Wikileaks of what appears to be the actual email that led to the hacking of Podesta’s account (sorry for the circular reasoning there) seems to confirm SecureWorks’ analysis. An email sent to John.Podesta@gmail.com appears to come from Google and warns that someone located in Ukraine had tried to access his account.

“Google stopped this sign-in attempt. You should change your password immediately,” it says. “CHANGE PASSWORD.” And there’s a link headed for bit.ly/1PibSU0.

Click on that Bitly link, and you are today brought to a warning page saying there “might be a problem with the requested link.” A bit too late for Podesta and the Clinton campaign.

The ultimate destination for that link appears to be Google, but it’s not. Instead, it sends visitors to a web site at http://myaccount.google.com-securitysettingpage.tk
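Why isn’t that Google? A web address is owned by whoever controls its rightmost labels. Here the address ends in com-securitysettingpage.tk, and the "myaccount.google" part is just a subdomain the attacker made up. The sketch below shows the check in code. It is a simplified illustration that assumes a plain suffix comparison is good enough; the helper names and the "real" example URL are mine, not taken from the SecureWorks report.

```python
from urllib.parse import urlsplit


def hostname_of(url):
    """Return the lowercased hostname of a URL, or "" if there is none."""
    return (urlsplit(url).hostname or "").lower()


def belongs_to(url, trusted_domain):
    """True only if the URL's host is trusted_domain or a subdomain of it."""
    host = hostname_of(url)
    trusted = trusted_domain.lower()
    # The leading dot matters: "evil-google.com" must not match "google.com".
    return host == trusted or host.endswith("." + trusted)


# The look-alike address from the phishing email.
phish = "http://myaccount.google.com-securitysettingpage.tk"
# An illustrative address on Google's own domain (assumed for comparison).
real = "https://myaccount.google.com/security"

print(hostname_of(phish))               # myaccount.google.com-securitysettingpage.tk
print(belongs_to(phish, "google.com"))  # False
print(belongs_to(real, "google.com"))   # True
```

The phishing host merely contains the text "google.com"; it does not end with it. That is the whole trick, and it is easy to miss when you are reading left to right in a hurry.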

An IT worker for the Clinton campaign ominously comments in the thread posted at Wikileaks that “this is a legitimate email,” though to his credit, he leaves instructions to visit Google at the correct link to change the password.

Then, ironically, he offers this call to action:

“Does JDP (John Podesta) have the 2 step verification or do we need to do with him on the phone? Don’t want to lock him out of his in box!”

If only a locked inbox were the biggest email problem Podesta had.